
The only difference here is that we pass the multiBlockArray we created earlier as the argument to how many blocks we want to run, and then proceed as normal. Once we got the space we need on our device, it’s time to launch our kernel and do the calculation needed from the GPU. HostArray, BLOCKS * BLOCKS * sizeof(int), As you can see, we take care of a two dimensional array, using BLOCKS*BLOCKS when allocating:ĬudaMalloc( (void**)&deviceArray, BLOCKS * BLOCKS * sizeof(int) ) Next, we allocate the memory needed for our array on the device.

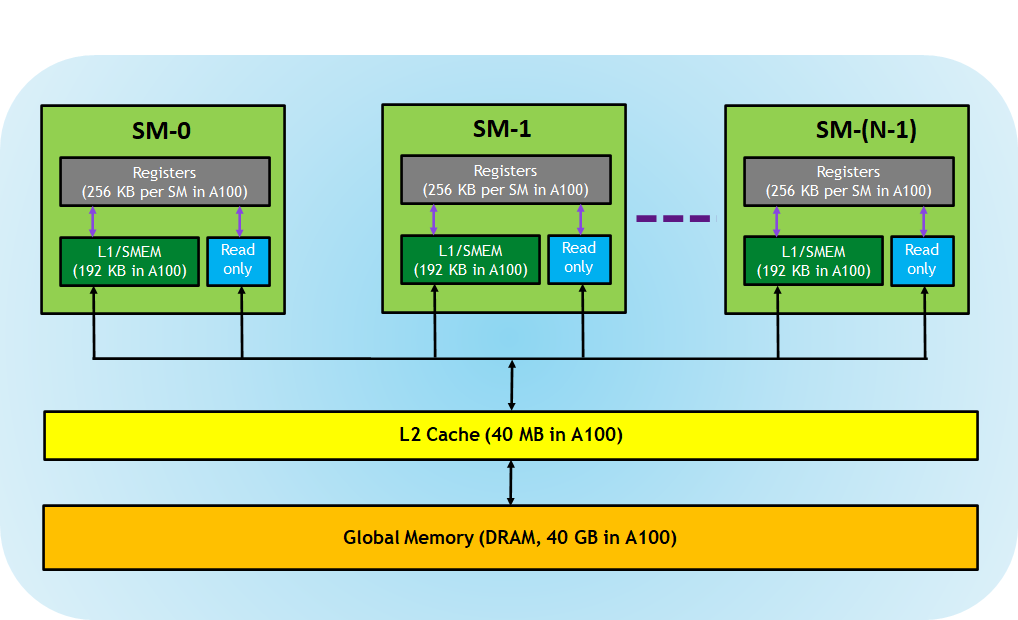

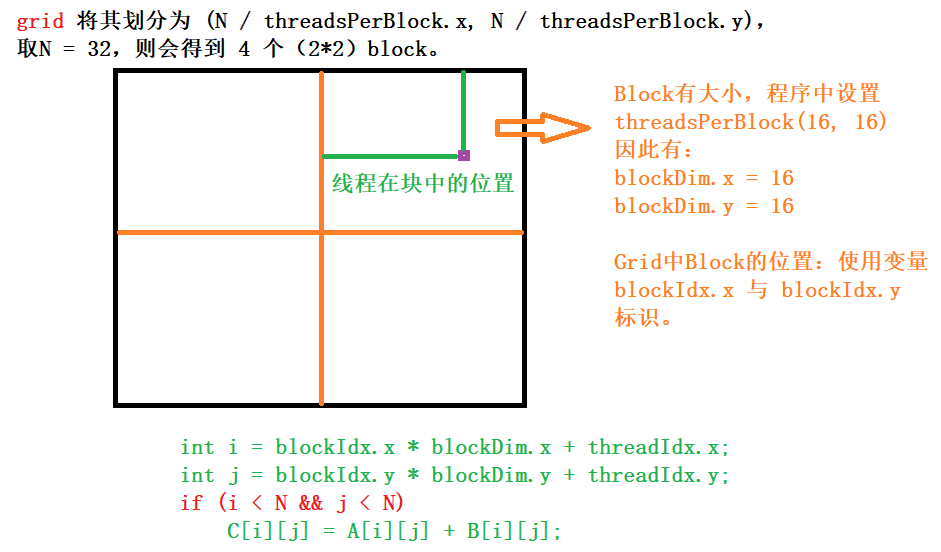

Then we define a 2d array, a pointer for copying to/from the GPU and our dim3 variable: So, why is it dim3? Well, in the future CUDA C might support 3d-arrays as well, but for now, it’s only reserved, so when you create the array, you specify the dimension of the X-axis, and the Y-axis, and then the 3rd axis automatically is set to 1.įirst of all, include stdio.h and define the size of our block array: How do we do this? First of all, we will need to use a keyword from the CUDA C library, and define our variable. Basically, it’s all the same as before, but we used multidimensional indexing. But since they are 2d, you can think of them as a coordinate system where you have blocks in the x- and y-axis. These types of blocks work just the same way as the other blocks we have seen so far in this tutorial. We will create the same program as in the last tutorial, but instead display a 2d-array of blocks, each displaying a calculated value. In this short tutorial, we will look at how to launch multidimensional blocks on the GPU (grids). 20, 2010.Welcome to part 5 of the Parallel Computing tutorial. number of threads in a block in the x dimension) Full global thread ID in x dimension can be computed by: x = blockIdx.x * blockDim.x + threadIdx.x Įxample - x direction A 1-D grid and 1-D block 4 blocks, each having 8 threads Global ID 26 threadIdx.x threadIdx.x threadIdx.x threadIdx.x 1 2 3 4 5 6 7 1 2 3 4 5 6 7 1 2 3 4 5 6 7 1 2 3 4 5 6 7 blockIdx.x = 0 blockIdx.x = 1 blockIdx.x = 2 blockIdx.x = 3 gridDim = 4 x 1 blockDim = 8 x 1 Global thread ID = blockIdx.x * blockDim.x + threadIdx.x = 3 * = thread 26 with linear global addressing Derived from Jason Sanders, "Introduction to CUDA C" GPU technology conference, Sept. ThreadIdx.x - “thread index” within block in “x” dimension blockIdx.x - “block index” within grid in “x” dimension blockDim.x - “block dimension” in “x” dimension (i.e. 
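The 1-D indexing formula is easier to see in a running kernel. Below is a minimal sketch in which every thread writes its own global ID into an array. The names globalThreadId, writeGlobalIds and runGlobalIdExample are hypothetical. The launch of 4 blocks of 8 threads mirrors the example above.

```cuda
#include <cuda_runtime.h>

// Global thread ID for a 1-D grid of 1-D blocks:
//   x = blockIdx.x * blockDim.x + threadIdx.x
// Declared __host__ __device__ so the same formula can also be checked on the CPU.
__host__ __device__ int globalThreadId(int block, int threadsPerBlock, int thread)
{
    return block * threadsPerBlock + thread;
}

// Each thread stores its own global ID at that position in out.
__global__ void writeGlobalIds(int *out)
{
    int id = globalThreadId(blockIdx.x, blockDim.x, threadIdx.x);
    out[id] = id;
}

// Launches 4 blocks of 8 threads (gridDim = 4 x 1, blockDim = 8 x 1),
// then copies the 32 IDs back into hostIds. Returns the first CUDA error, if any.
cudaError_t runGlobalIdExample(int hostIds[32])
{
    const int blocks = 4, threadsPerBlock = 8;
    const size_t bytes = blocks * threadsPerBlock * sizeof(int);
    int *deviceIds = nullptr;

    cudaError_t err = cudaMalloc((void **)&deviceIds, bytes);
    if (err != cudaSuccess) return err;

    writeGlobalIds<<<blocks, threadsPerBlock>>>(deviceIds);
    err = cudaGetLastError();                       // catches launch-configuration errors
    if (err == cudaSuccess)                         // cudaMemcpy waits for the kernel to finish
        err = cudaMemcpy(hostIds, deviceIds, bytes, cudaMemcpyDeviceToHost);

    cudaFree(deviceIds);
    return err;
}
```

On a machine with a CUDA device, when runGlobalIdExample returns cudaSuccess, hostIds[26] holds 26. That is the thread with threadIdx.x = 2 in block 3.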
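The part 5 program itself appears only in screenshots, so what follows is a hedged sketch of its overall shape, not the tutorial's exact code. It makes these assumptions:

- BLOCKS is 4.
- The kernel (hypothetically named fillFromGrid) runs one thread per block.
- The "calculated value" is each block's row-major index, `blockIdx.x + blockIdx.y * gridDim.x`. The tutorial's real formula is not in the text.

The sketch keeps the tutorial's names multiBlockArray, hostArray and deviceArray.

```cuda
#include <cstdio>
#include <cuda_runtime.h>

#define BLOCKS 4  // assumed size; the tutorial's own value is only in its screenshot

// Row-major position of block (bx, by) in a grid that is gridWidth blocks wide.
__host__ __device__ int blockLinearIndex(int bx, int by, int gridWidth)
{
    return bx + by * gridWidth;
}

// Hypothetical kernel: launched with one thread per block; each block writes
// its own linear index as the "calculated value".
__global__ void fillFromGrid(int *deviceArray)
{
    int idx = blockLinearIndex(blockIdx.x, blockIdx.y, gridDim.x);
    deviceArray[idx] = idx;
}

// Runs the BLOCKS x BLOCKS grid, copies the result into hostArray and prints it.
cudaError_t runGridExample(int hostArray[BLOCKS][BLOCKS])
{
    int *deviceArray = nullptr;
    dim3 multiBlockArray(BLOCKS, BLOCKS);           // x and y given, z defaults to 1
    const size_t bytes = BLOCKS * BLOCKS * sizeof(int);

    cudaError_t err = cudaMalloc((void **)&deviceArray, bytes);
    if (err != cudaSuccess) return err;

    fillFromGrid<<<multiBlockArray, 1>>>(deviceArray);  // 2-D grid, 1 thread per block
    err = cudaGetLastError();
    if (err == cudaSuccess)
        err = cudaMemcpy(hostArray, deviceArray, bytes, cudaMemcpyDeviceToHost);
    cudaFree(deviceArray);
    if (err != cudaSuccess) return err;

    for (int y = 0; y < BLOCKS; ++y) {              // hostArray[y][x] holds block (x, y)
        for (int x = 0; x < BLOCKS; ++x)
            printf("%3d ", hostArray[y][x]);
        printf("\n");
    }
    return cudaSuccess;
}
```

This kernel overwrites every element, so the sketch skips the host-to-device copy of hostArray before the launch. Keep that copy if your kernel reads the array's initial contents. On a machine with a CUDA device, printed row y contains the values 4y to 4y + 3.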
NVIDIA GPUs consist of an array of execution cores, each of which can support a large number of threads, many more than the number of cores. Threads are grouped into "blocks", and each kernel call uses a "grid" of blocks. Blocks can be 1-, 2-, or 3-dimensional; grids can be 1- or 2-dimensional. The programmer specifies the grid/block organization on each kernel call, within limits set by the GPU. This allows flexibility and efficiency in processing 1-D, 2-D, and 3-D data on the GPU.

(Figure: a grid of blocks, which can have 1 or 2 dimensions, and a block of threads, which can have 1, 2 or 3 dimensions. Source: CUDA C Programming Guide, v3.2, 2010, NVIDIA.)

Defining the grid/block structure: each kernel call needs values for two key structures, the number of blocks in each dimension and the number of threads per block in each dimension:

`myKernel<<<B, T>>>(arg1, … );`

- B: a structure that defines the number of blocks in the grid in each dimension (1-D or 2-D).
- T: a structure that defines the number of threads in a block in each dimension (1-D, 2-D, or 3-D).

If you want a 1-D structure, you can use an integer for B and T. An integer B defines a 1-D grid of that size, and an integer T defines a 1-D block of that size.

Device characteristics, some limitations: the limits are linked to the GPU's internal organization; for example, the threads in one block execute together. NVIDIA defines "compute capabilities" (1.0, 1.1, …), each with its own limits and supported features. For compute capability 1.0:

- Maximum number of threads per block: 512
- Maximum size of the x- and y-dimension of a thread block: 512
- Maximum size of each dimension of a grid of thread blocks: 65535

The notes this material comes from (ITCS 6/8010 CUDA Programming, UNC-Charlotte, B.) introduce:

- one-dimensional and multidimensional grids and blocks
- how the grid and block structures are defined in CUDA
- predefined CUDA variables
- adding vectors using one-dimensional structures
- adding/multiplying arrays using 2-dimensional structures
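B and T can be plain integers (the 1-D shorthand) or dim3 values. The sketch below shows a 2-D launch for element-wise matrix addition, one of the topics the notes list. The names gridFor, totalThreads, addMatrices and launchAdd are hypothetical. The 16 x 16 block shape is an assumption, chosen to stay under the 512-threads-per-block limit of compute capability 1.0.

```cuda
#include <cuda_runtime.h>

// Hypothetical helper: number of blocks needed in x and y so that a
// width x height domain is fully covered by blocks of shape T (rounded up).
__host__ __device__ dim3 gridFor(int width, int height, dim3 T)
{
    return dim3((width + T.x - 1) / T.x, (height + T.y - 1) / T.y);  // z defaults to 1
}

// Hypothetical helper: total threads created by kernel<<<B, T>>>.
__host__ __device__ unsigned long long totalThreads(dim3 B, dim3 T)
{
    return (unsigned long long)B.x * B.y * B.z * T.x * T.y * T.z;
}

// Hypothetical 2-D kernel: each thread adds one element of two row-major
// width x height matrices.
__global__ void addMatrices(const float *a, const float *b, float *c,
                            int width, int height)
{
    int col = blockIdx.x * blockDim.x + threadIdx.x;  // global x index
    int row = blockIdx.y * blockDim.y + threadIdx.y;  // global y index
    if (col < width && row < height) {                // skip threads past the edge
        int i = row * width + col;
        c[i] = a[i] + b[i];
    }
}

// Launches addMatrices on device pointers dA, dB and dC.
void launchAdd(const float *dA, const float *dB, float *dC, int width, int height)
{
    dim3 T(16, 16);                      // 256 threads per block (z = 1)
    dim3 B = gridFor(width, height, T);  // enough blocks to cover the matrix
    addMatrices<<<B, T>>>(dA, dB, dC, width, height);
    // 1-D shorthand for comparison: kernel<<<4, 8>>> means
    // kernel<<<dim3(4, 1, 1), dim3(8, 1, 1)>>>.
}
```

Rounding the block count up means some threads in the edge blocks fall outside the matrix. The bounds check in the kernel makes those threads do nothing. For example, a 100 x 50 matrix gets a 7 x 4 grid.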
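A program can also read the installed device's limits with cudaGetDeviceProperties instead of hard-coding the compute capability 1.0 numbers, and check a launch shape before using it. In this sketch, fitsDeviceLimits, cc10Limits and printDeviceLimits are hypothetical helpers. cc10Limits fills a cudaDeviceProp by hand. It uses the 1.0 values quoted above plus two values that are not in the notes and are assumptions here: a maximum block z-dimension of 64 and a grid z-dimension of 1.

```cuda
#include <cstdio>
#include <cuda_runtime.h>

// Hypothetical helper: true if kernel<<<B, T>>> respects the limits stored in prop.
bool fitsDeviceLimits(dim3 B, dim3 T, const cudaDeviceProp &prop)
{
    unsigned long long threads = (unsigned long long)T.x * T.y * T.z;
    if (threads > (unsigned long long)prop.maxThreadsPerBlock) return false;

    const unsigned int block[3] = {T.x, T.y, T.z};
    const unsigned int grid[3]  = {B.x, B.y, B.z};
    for (int i = 0; i < 3; ++i) {
        if (block[i] > (unsigned int)prop.maxThreadsDim[i]) return false;  // per-dimension block size
        if (grid[i]  > (unsigned int)prop.maxGridSize[i])   return false;  // per-dimension grid size
    }
    return true;
}

// Hypothetical helper: compute capability 1.0 limits filled in by hand.
// 512 / 512 / 65535 come from the notes; block z = 64 and grid z = 1 are assumptions here.
cudaDeviceProp cc10Limits()
{
    cudaDeviceProp p = {};
    p.major = 1;
    p.minor = 0;
    p.maxThreadsPerBlock = 512;
    p.maxThreadsDim[0] = 512;  p.maxThreadsDim[1] = 512;  p.maxThreadsDim[2] = 64;
    p.maxGridSize[0] = 65535;  p.maxGridSize[1] = 65535;  p.maxGridSize[2] = 1;
    return p;
}

// Prints the real limits of device 0 (needs a CUDA-capable GPU at run time).
int printDeviceLimits()
{
    cudaDeviceProp prop;
    if (cudaGetDeviceProperties(&prop, 0) != cudaSuccess) return 1;
    printf("compute capability %d.%d\n", prop.major, prop.minor);
    printf("max threads per block: %d\n", prop.maxThreadsPerBlock);
    printf("max block size: %d x %d x %d\n",
           prop.maxThreadsDim[0], prop.maxThreadsDim[1], prop.maxThreadsDim[2]);
    printf("max grid size: %d x %d x %d\n",
           prop.maxGridSize[0], prop.maxGridSize[1], prop.maxGridSize[2]);
    return 0;
}
```

Passing the result of cudaGetDeviceProperties to fitsDeviceLimits checks a launch against the actual GPU rather than the 1.0 table. The hand-filled cc10Limits is only a stand-in for when no device is available.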
